AI image synthesis advances in 2022 have made images like this one possible. It was created using Stable Diffusion, enhanced with GFPGAN, expanded with DALL-E, and then manually composited together.

Benj Edwards / Ars Technica

More than once this year, AI experts have repeated a familiar refrain: “Please slow down.” AI news in 2022 has been rapid-fire and relentless; the moment you knew where things currently stood in AI, a new paper or discovery would make that understanding obsolete.

In 2022, we arguably hit the knee of the curve when it came to generative AI that can produce creative works made up of text, images, audio, and video. This year, deep-learning AI emerged from a decade of research and began making its way into commercial applications, allowing millions of people to try out the tech for the first time. AI creations inspired wonder, created controversies, prompted existential crises, and turned heads.

Here's a look back at the seven biggest AI news stories of the year. It was hard to choose only seven, but if we didn't cut it off somewhere, we'd still be writing about this year's events well into 2023 and beyond.

April: DALL-E 2 dreams in pictures

A DALL-E example of “an astronaut riding a horse.”

OpenAI

In April, OpenAI announced DALL-E 2, a deep-learning image-synthesis model that blew minds with its seemingly magical ability to generate images from text prompts. Trained on hundreds of millions of images pulled from the Internet, DALL-E 2 knew how to make novel combinations of imagery thanks to a technique called latent diffusion.

Twitter was soon filled with images of astronauts on horseback, teddy bears wandering ancient Egypt, and other nearly photorealistic works. We last heard about DALL-E a year prior, when version 1 of the model had struggled to render a low-resolution avocado chair. Suddenly, version 2 was illustrating our wildest dreams at 1024×1024 resolution.

At first, given concerns about misuse, OpenAI only allowed 200 beta testers to use DALL-E 2. Content filters blocked violent and sexual prompts. Gradually, OpenAI let over a million people into a closed trial, and DALL-E 2 finally became available to everyone in late September. But by then, another contender in the latent-diffusion world had risen, as we'll see below.

July: Google engineer thinks LaMDA is sentient

Former Google engineer Blake Lemoine.

Getty Images | Washington Post

In early July, the Washington Post broke news that a Google engineer named Blake Lemoine was put on paid leave related to his belief that Google's LaMDA (Language Model for Dialogue Applications) was sentient and that it deserved rights equal to a human's.

While working as part of Google's Responsible AI organization, Lemoine began chatting with LaMDA about religion and philosophy and believed he saw true intelligence behind the text. “I know a person when I talk to it,” Lemoine told the Post. “It doesn't matter whether they have a brain made of meat in their head. Or if they have a billion lines of code. I talk to them. And I hear what they have to say, and that is how I decide what is and isn't a person.”

Google replied that LaMDA was only telling Lemoine what he wanted to hear and that LaMDA was not, in fact, sentient. Like the text generation tool GPT-3, LaMDA had previously been trained on millions of books and websites. It responded to Lemoine's input (a prompt, which includes the entire text of the conversation) by predicting the most likely words that should follow, without any deeper understanding.
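To make the idea of predicting the most likely next words concrete, here is a toy sketch in Python. It is not how LaMDA or GPT-3 work internally (those are large neural networks trained on vast amounts of text). It only counts which word most often follows each word in a tiny made-up corpus, then extends a prompt one word at a time. The corpus, the function names, and the word limit are all illustrative assumptions.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption for illustration only).
CORPUS = "i talk to them . i hear what they say . i talk to them again ."

def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def continue_prompt(follows, prompt, max_words=3):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word never appeared in training, so stop
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(CORPUS)
print(continue_prompt(model, "i"))
```

With this corpus, the prompt "i" is continued as "i talk to them", simply because those are the most frequent follow-on words in the counts. No understanding is involved, which is the point Google was making at a much larger scale.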

Along the way, Lemoine allegedly violated Google's confidentiality policy by telling others about his group's work. Later in July, Google fired Lemoine for violating data security policies. He was not the last person in 2022 to get swept up in the hype over an AI's large language model, as we'll see.

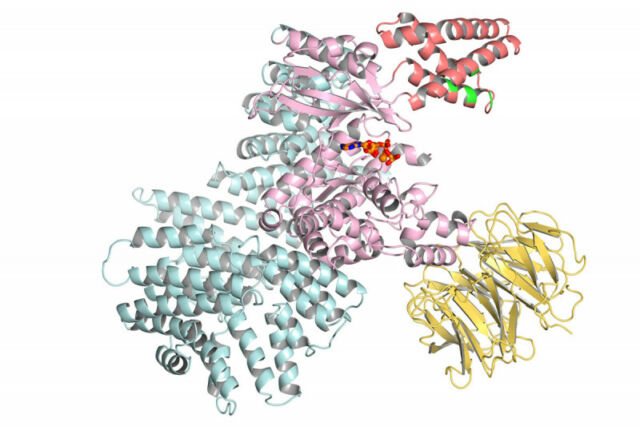

July: DeepMind AlphaFold predicts almost every known protein structure

Diagram of protein ribbon models.

In July, DeepMind announced that its AlphaFold AI model had predicted the shape of almost every known protein of almost every organism on Earth with a sequenced genome. Originally announced in the summer of 2021, AlphaFold had earlier predicted the shape of all human proteins. But one year later, its protein database expanded to contain over 200 million protein structures.

DeepMind made these predicted protein structures available in a public database hosted by the European Bioinformatics Institute at the European Molecular Biology Laboratory (EMBL-EBI), allowing researchers from all over the world to access them and use the data for research related to medicine and biological science.

Proteins are basic building blocks of life, and knowing their shapes can help scientists control or modify them. That comes in particularly handy when developing new drugs. “Almost every drug that has come to market over the past few years has been designed partly through knowledge of protein structures,” said Janet Thornton, a senior scientist and director emeritus at EMBL-EBI. That makes knowing all of them a big deal.